Critical Section Problem in Operating System (OS)

Critical Section Problem

Critical Section Problem in Operating System (OS) is a fundamental concept that is crucial for ensuring proper functioning of concurrent processes. The critical section problem is a classical computer science problem that arises in multi-threaded and multi-process systems. It deals with the issue of managing access to shared resources in a way that prevents conflicts and ensures data integrity.

In this section, we will delve deeper into understanding the critical section problem, its challenges, and the various solutions that have been proposed in operating system design to handle these issues efficiently.

Critical Section Problem in Operating system

Critical Section problem is a classic computer science problem in many banking systems, where there are many shared resources like memory, I/O etc. Concurrent access may override changes made by other process running in parallel.

We already know about Critical section of code from Process synchronization post. Critical section is a segment of code in which process changes common variable, updates file etc.

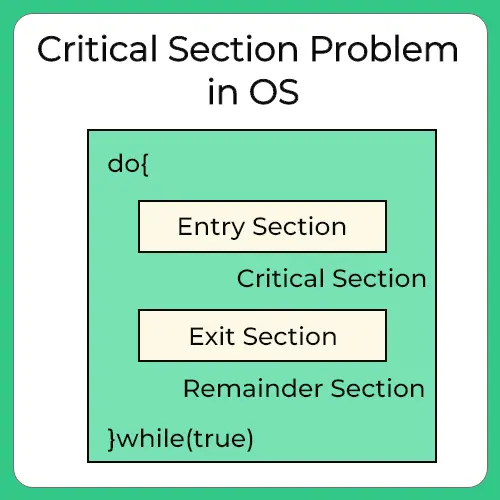

The Critical Section Problem is to design a protocol which processes use to cooperate .To enter into critical section, a process requests permission through entry section code. After critical section code is executed, then there is exit section code, to indicate the same.

Key Concepts:

- Critical Section: A critical section is a segment of code in which a process manipulates shared resources or variables. This is where the risk of interference from other processes is highest, and improper synchronization can lead to data corruption or unexpected behaviors.

- Concurrency: In a system with multiple processes or threads, concurrency refers to the ability of processes to run in parallel or in an interleaved manner. While this improves performance, it introduces the challenge of ensuring that processes do not interfere with each other when accessing shared resources.

- Shared Resources: These are resources (such as memory, files, or hardware devices) that are shared among multiple processes. Because these resources are used by more than one process simultaneously, proper management is necessary to avoid conflicts.

Prepare for Interview with us:

Constituents of Critical Section

The main blocks of process are –

- Entry Section – To enter the critical section code, a process must request permission. Entry Section code implements this request.

- Critical Section – This is the segment of code where process changes common variables, updates a table, writes to a file and so on. When 1 process is executing in its critical section, no other process is allowed to execute in its critical section.

- Exit Section – After the critical section is executed , this is followed by exit section code which marks the end of critical section code.

- Remainder Section – The remaining code of the process is known as remaining section.

Example of Negative Effect

When several threads (or processes) share data, running in parallel on different cores , then changes made by one process may override changes made by another process running parallel. Resulting in inconsistent data. So, this requires processes to be synchronized, handling system resources and processes to avoid such situation is known as Process Synchronization.

CLASSIC BANKING EXAMPLE –

- Consider your bank account has 5000$.

- You try to withdraw 4000$ using net banking and simultaneously try to withdraw via ATM too.

- For Net Banking at time t = 0ms bank checks you have 5000$ as balance and you’re trying to withdraw 4000$ which is lesser than your available balance. So, it lets you proceed further and at time t = 1ms it connects you to server to transfer the amount

- Imagine, for ATM at time t = 0.5ms bank checks your available balance which currently is 5000$ and thus let’s you enter ATM password and withdraw amount.

- At time t = 1.5 ms ATM dispenses the cash of 4000$ and at time t = 2 net banking transfer is complete of 4000$.

EFFECT ON THE SYSTEM

Now, due to concurrent access and processing time that computer takes in both ways you were able to withdraw 3000$ more than your balance. In total 8000$ were taken out and balance was just 5000$.

Critical Section Problem conditions

A critical solution problem satisfies 3 things –

- Mutual Exclusion must be preserved.

- Progress Requirement must be satisfied.

- Bounded waiting time requirement is met.

Let’s look at each of these in detail –

- Mutual Exclusion is preserved – If a process P1 is executing in its critical section, no other process can execute in critical section.

- Process or Progress Requirement must be satisfied – If no process is executing in its critical section, then the decision as to which process will go next into executing its critical section code is decided by only those process which are in entry section, that is, not before it. That is the process which are in remainder section can not take part in this decision. Also, this selection has to be made in certain time, it can’t be postponed indefinitely.

- Bounded Waiting time requirement is met – If a process A has made a request to enter its critical section, then there is a limit on the number of times , other processes go before into their critical section. So, waiting time, as a result is bonded. After a certain time, the process gets to be in critical Section, doesn’t wait indefinitely.

Advanced Topics

To handle critical section is Operating System, There are 2 ways –

- Preemptive Kernel

- Non Preemptive Kernel

- Preemptive Kernel

Allows process to be preempted while it’s running in kernel mode.

Can be prone to race around condition, if poorly designed. So, must be carefully designed .

Difficult to Design for SMP Architecture as in this environment, it’s possible for 2 Kernel -Mode processes to run simultaneously on 2 different processes.

It’s more responsible, as less risk for any process to run indefinitely.

Suitable for Real Time Programming.

What is Race Around Condition ?

A race around condition is a situation that can occur in the critical section problem when multiple processes or threads access and manipulate shared data concurrently, and the final outcome depends on the order in which the access occurs.

Eg – Consider a kernel data structure that maintains a list of all open files in system. List is modified if a new file is opened or closed. If 2 process simultaneously try to open files , it may separate updates to list leading to race around condition

Non Preemptive Kernel –

Does not allow a process running in Kernel Mode to be preempted, that is stopped. Only after the process exits the Kernel Mode by self, will the next process be given the kernel.

Free from Race Around Condition as only 1 process is executed at a time in kernel.

- The software based solution to critical section problem is Peterson’s Algorithm, Semaphores, Monitors etc

- We will discuss all of these in details in the future posts in the series.

- The hardware based solution to critical section is exclusive access to memory location, atomic swap etc.

To wrap up

The Critical Section Problem in Operating Systems is a crucial issue for managing access to shared resources in concurrent processes. It involves ensuring that when one process is executing in its critical section, no other process can interfere. Various methods like entry and exit sections are used to handle synchronization, ensuring mutual exclusion, progress, and bounded waiting. Solutions to the problem can be either software based (like Peterson’s Algorithm and Semaphores) or hardware-based, and these help prevent errors like data inconsistency and deadlocks in multi process systems.

FAQs

Mutual exclusion ensures that if one process is executing in its critical section, no other process can enter it, preventing conflicts or data corruption.

Bounded waiting ensures that once a process requests to enter its critical section, it will not have to wait indefinitely, limiting the number of processes that can access the critical section before it.

A race condition occurs when multiple processes access shared data simultaneously without proper synchronization, leading to unpredictable or incorrect results.

A preemptive kernel allows a process to be interrupted during execution, while a non-preemptive kernel only allows processes to be switched when they finish their task, reducing the risk of race conditions.

Login/Signup to comment