
Parametric vs Non-Parametric Tests in Data Analytics

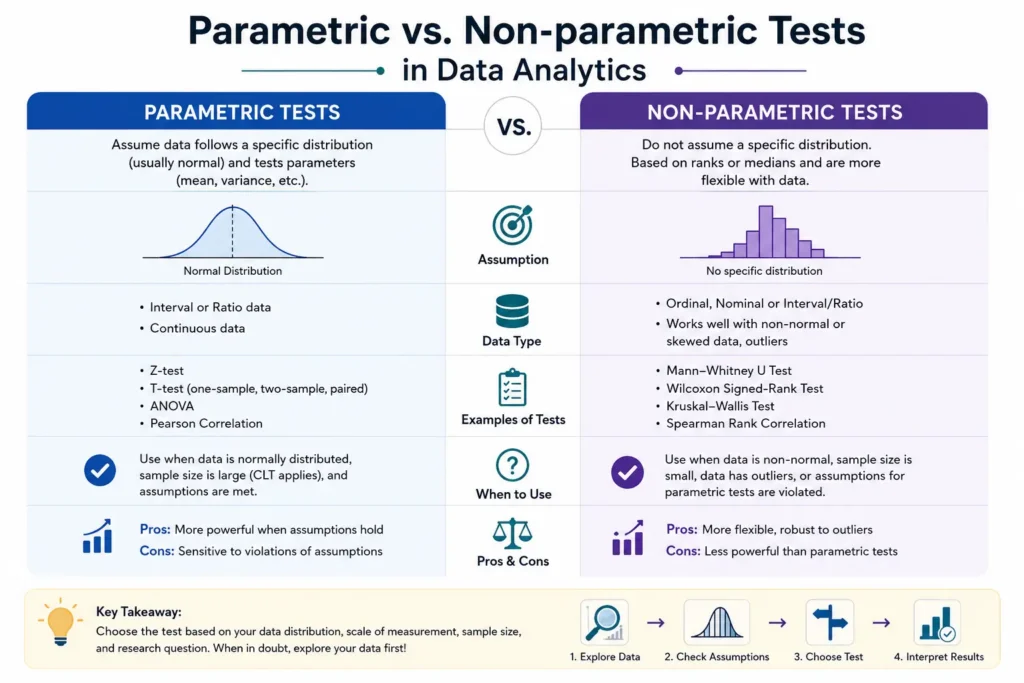

In modern data analytics, selecting the correct statistical method is crucial for drawing meaningful insights. Parametric and non-parametric tests are two major categories of hypothesis testing techniques. Each serves a different purpose depending on the nature of the dataset, its distribution, and sample size. Understanding their differences helps analysts avoid incorrect conclusions and improve analytical precision.

Parametric tests assume a specific data distribution (usually normal), while non-parametric tests make fewer assumptions and apply to a wider range of data. Choosing the right test makes results more accurate and reliable and supports better decisions in data-driven environments.

What Are Parametric Tests?

Parametric tests are statistical methods that use sample data to draw conclusions about population parameters, such as the mean or variance. These tests rely on specific assumptions about the dataset, most commonly that the data follows a normal distribution. They are widely used because they are more powerful than non-parametric alternatives when those assumptions are satisfied.

In data analytics, parametric tests help analysts compare means, evaluate relationships, and test hypotheses using structured numerical data. These tests are commonly applied in fields such as business analytics, healthcare, finance, marketing, and machine learning to identify patterns and make data-driven decisions.

The term “parametric” refers to the assumption that the data comes from a distribution described by a small set of parameters, such as the mean and variance (or standard deviation) of a normal distribution. Because these tests build that distributional information into their calculations, they are generally more efficient than distribution-free methods when the assumption is reasonable.

Parametric tests are especially useful when:

- The sample data is normally distributed

- The dataset contains numerical values

- Variance across groups is similar

- Sample sizes are sufficiently large

- The data does not contain extreme outliers

Because of their statistical power, parametric tests are considered one of the most important tools in modern data analytics and inferential statistics.

Key Characteristics of Parametric Tests

Assume Normal Distribution of Data

Parametric tests typically assume that the data follows a normal distribution, where values are symmetrically distributed around the mean. Test statistics and p-values are derived from this assumption, so they are only trustworthy when it holds at least approximately.

Require Interval or Ratio Scale Data

These tests work best with interval or ratio data, where numerical measurements have meaningful distances between values. Examples include height, income, temperature, sales revenue, and exam scores.

More Powerful When Assumptions Are Met

Compared to non-parametric tests, parametric tests usually provide stronger statistical power. This means they are more capable of detecting actual differences or relationships within the data.

Use Mean and Standard Deviation

Parametric methods rely heavily on statistical parameters such as mean and standard deviation. These measures help summarize data and evaluate variations between groups.
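As a rough illustration of how these two parameters drive a parametric test, the sketch below computes a pooled two-sample t statistic using only Python's standard library. The function name `pooled_t_statistic` and the sample values are made up for this example, and the equal-variance assumption is built in. In practice a library routine such as SciPy's `scipy.stats.ttest_ind` would also return a p-value.

```python
from math import sqrt
from statistics import mean, variance


def pooled_t_statistic(a, b):
    """Student's two-sample t statistic, assuming equal variances in both groups."""
    n_a, n_b = len(a), len(b)
    # Pooled variance: weighted average of the two sample variances.
    pooled_var = ((n_a - 1) * variance(a) + (n_b - 1) * variance(b)) / (n_a + n_b - 2)
    # Standard error of the difference between the two group means.
    standard_error = sqrt(pooled_var * (1 / n_a + 1 / n_b))
    return (mean(a) - mean(b)) / standard_error


# Hypothetical measurements for two groups.
group_a = [12.0, 14.0, 16.0, 18.0, 20.0]
group_b = [10.0, 11.0, 12.0, 13.0, 14.0]

t_stat = pooled_t_statistic(group_a, group_b)
print(f"t = {t_stat:.3f}")
```

The statistic is simply the difference in means scaled by a standard error derived from the variances, which is why both quantities must be meaningful for the data at hand.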

Sensitive to Outliers

Extreme values or outliers can significantly affect the accuracy of parametric tests. Since these tests depend on averages, unusual data points may distort the final results.
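A small sketch makes this concrete. The numbers below are invented for illustration: a single extreme value (for example, a data-entry error) is appended to an otherwise tight sample, and the shift in the mean is compared with the shift in the median.

```python
from statistics import mean, median

# Hypothetical sample, then the same sample with one extreme value added.
clean = [48, 50, 51, 52, 49]
with_outlier = clean + [500]

mean_shift = mean(with_outlier) - mean(clean)        # how far the mean moves
median_shift = median(with_outlier) - median(clean)  # how far the median moves

print(f"mean shift:   {mean_shift}")
print(f"median shift: {median_shift}")
```

The mean moves by 75 units while the median moves by only 0.5, so any test built on the mean inherits that distortion.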

Suitable for Large Datasets

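Parametric tests are highly effective with large datasets. By the central limit theorem, the sampling distribution of the mean becomes approximately normal as the sample size grows, so tests on means remain reasonably accurate even when the raw data is not perfectly normal. A larger sample does not, however, make the data itself normal or equalize variances across groups.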

Common parametric tests include:

- Z Test

- T Test

- ANOVA (Analysis of Variance)
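To show what the simplest of these looks like in practice, here is a minimal sketch of a two-sided one-sample Z test using Python's standard library. It assumes the population standard deviation is known, which is what distinguishes a Z test from a T test. The function name, the sample, and the population values (mean 100, standard deviation 6) are all hypothetical.

```python
from math import sqrt
from statistics import NormalDist, mean


def one_sample_z_test(sample, population_mean, population_sd):
    """Two-sided one-sample Z test with a known population standard deviation."""
    standard_error = population_sd / sqrt(len(sample))
    z = (mean(sample) - population_mean) / standard_error
    # Two-sided p-value from the standard normal distribution.
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical sample of nine measurements.
sample = [102, 98, 105, 110, 99, 104, 107, 101, 101]
z, p_value = one_sample_z_test(sample, population_mean=100, population_sd=6)
print(f"z = {z:.2f}, p = {p_value:.4f}")
```

With these made-up numbers the sample mean is 103, giving z = 1.5 and a p-value of about 0.13, so the difference would not be significant at the usual 0.05 level.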

What Are Non-Parametric Tests?

Non-parametric tests are statistical methods used in data analytics and research that do not require the data to follow a specific distribution. Unlike parametric tests, these methods are more flexible and can work with non-normal, ranked, ordinal, or categorical data. Because of this flexibility, non-parametric tests are widely used in real-world data analysis where datasets may not meet strict statistical assumptions.

These tests are especially useful when working with small sample sizes, survey responses, customer feedback, rankings, or datasets that contain outliers. In modern data analytics, non-parametric tests help analysts identify patterns, compare groups, and make decisions even when the data is irregular or incomplete.

Non-parametric methods are often called “distribution-free tests” because they do not depend on normal distribution assumptions. They are not assumption-free, however: most still require independent observations. This makes them highly practical in industries such as healthcare, business analytics, education, marketing, and social sciences.

Key Characteristics of Non-Parametric Tests

Non-parametric tests are popular because of their adaptability and ease of use in complex datasets. Below are some important characteristics:

- No strict distribution assumptions are required

- Suitable for non-normal or skewed data

- Effective for small sample sizes

- Works with ordinal, nominal, or ranked data

- Less sensitive to outliers and extreme values

- Useful when data quality is uncertain

- Easier to apply in real-world scenarios

These characteristics make non-parametric methods a strong alternative when parametric tests cannot be applied properly.
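Much of this robustness comes from the fact that many non-parametric tests work on ranks rather than raw values. The sketch below, with a hypothetical helper `average_ranks` and invented scores, shows that replacing the largest value with an extreme one leaves the ranks unchanged.

```python
def average_ranks(values):
    """Rank values from 1 to n, giving tied values the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        # Find the end of the current run of tied values.
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared_rank = (i + j) / 2 + 1  # average of 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = shared_rank
        i = j + 1
    return ranks


# Hypothetical scores; the second list replaces the top score with an extreme value.
scores = [3, 7, 7, 10, 12]
scores_extreme = [3, 7, 7, 10, 1200]

print(average_ranks(scores))
print(average_ranks(scores_extreme))
```

Both calls produce the same ranks, so a rank-based test statistic is unaffected by how extreme the largest observation is.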

Why Non-Parametric Tests Are Important in Data Analytics

In practical data analytics, datasets often contain irregular patterns, outliers, or non-normal distributions. In such situations, non-parametric tests remain valid because they do not depend on the normality assumption.

Businesses and researchers use non-parametric tests to:

- Analyze customer satisfaction surveys

- Compare user behavior patterns

- Study medical and psychological data

- Evaluate ranked preferences

- Analyze categorical datasets

Because they are flexible and robust, non-parametric tests are widely used in modern data-driven decision-making.

Common non-parametric tests include:

- Mann-Whitney U Test

- Wilcoxon Signed-Rank Test

- Kruskal-Wallis Test
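As an illustration of the first test in this list, the sketch below computes the Mann-Whitney U statistic directly from its pairwise definition. The function name and the survey ratings are hypothetical, and no p-value is computed here. A library routine such as SciPy's `scipy.stats.mannwhitneyu` can supply one.

```python
def mann_whitney_u(a, b):
    """Return (U_a, U_b): U_a counts pairs where a value from a exceeds one from b.

    Tied pairs count as half a win for each group.
    """
    u_a = sum(
        1.0 if x > y else 0.5 if x == y else 0.0
        for x in a
        for y in b
    )
    u_b = len(a) * len(b) - u_a  # the two U values always sum to len(a) * len(b)
    return u_a, u_b


# Hypothetical 1-5 satisfaction ratings from two customer groups.
ratings_a = [4, 5, 3, 5, 4]
ratings_b = [2, 3, 1, 3, 2]

u_a, u_b = mann_whitney_u(ratings_a, ratings_b)
print(f"U_a = {u_a}, U_b = {u_b}")
```

Because the statistic depends only on which value in each pair is larger, it applies to ordinal ratings like these, where a mean would be hard to interpret.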

Parametric vs Non-Parametric Tests: Key Differences

| Aspect | Parametric Tests | Non-Parametric Tests |
|---|---|---|
| Data Distribution | Assumes a specific distribution, usually normal | No specific distribution assumed |
| Data Type | Interval or ratio data | Ordinal, ranked, or nominal data; also non-normal interval or ratio data |
| Sample Size | Works best with larger samples | Works with small samples |
| Statistical Power | Higher (if assumptions are met) | Lower when parametric assumptions are met |
| Outlier Sensitivity | Highly sensitive | Less sensitive |

Advantages of Parametric Tests

- Higher statistical power compared to non-parametric tests

- Provides more precise and reliable results when assumptions are met

- Works efficiently with large datasets

- Uses mean and standard deviation for precise analysis

- Suitable for advanced statistical techniques like ANOVA and regression

- Helps identify significant relationships in data

- Widely used in machine learning validation and predictive analytics

Advantages of Non-Parametric Tests

- Do not require normal distribution of data

- Flexible and easy to apply in real-world datasets

- Suitable for small sample sizes

- Works effectively with ranked and categorical data

- Less sensitive to outliers and extreme values

- Useful for skewed or irregular datasets

- Ideal for survey data and customer feedback analysis


Choosing between parametric and non-parametric tests depends on several factors:

- Check if your data follows a normal distribution

- Evaluate sample size

- Identify the type of data (numerical or categorical)

- Consider presence of outliers

If your data meets the assumptions → use parametric tests.

If not → switch to non-parametric tests.
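The checklist above can be sketched as a small helper function. This is a rule-of-thumb illustration only, not a standard procedure. The function name, its boolean inputs, and the `min_n=30` threshold are all assumptions made for this example. Treating a large sample as a substitute for normality is likewise a simplifying assumption.

```python
def suggest_test_family(is_numeric, looks_normal, n_per_group,
                        has_extreme_outliers, min_n=30):
    """Suggest 'parametric' or 'non-parametric' from the four checklist items.

    All inputs are judgments the analyst supplies; min_n is a hypothetical
    rule-of-thumb threshold, not a fixed statistical rule.
    """
    if not is_numeric:
        return "non-parametric"  # ordinal or categorical data
    if has_extreme_outliers:
        return "non-parametric"  # means would be distorted
    if looks_normal or n_per_group >= min_n:
        return "parametric"      # assumptions plausibly met
    return "non-parametric"      # small, non-normal sample


print(suggest_test_family(True, True, 12, False))     # small but normal sample
print(suggest_test_family(True, False, 12, False))    # small, skewed sample
print(suggest_test_family(False, False, 200, False))  # survey rankings
```

In real work, each input would come from inspecting the data, for example with a histogram or a formal normality check, rather than from a fixed flag.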

Conclusion

Parametric and non-parametric tests are essential tools in data analytics that serve different purposes. While parametric tests offer higher statistical power under ideal conditions, non-parametric tests provide flexibility for real-world data scenarios. Mastering both approaches ensures better decision-making, improved accuracy, and reliable insights in any data-driven project.

Frequently Asked Questions

What is the difference between parametric and non-parametric tests?

Answer:

Parametric tests are statistical methods that assume the dataset follows a normal distribution and has equal variance. These tests are commonly used for numerical and continuous data. Non-parametric tests, on the other hand, do not rely on strict assumptions and are suitable for skewed, ranked, or categorical data in data analytics.

When should parametric tests be used?

Answer:

Parametric tests should be used when the sample size is large, the data is normally distributed, and the variance is consistent across groups. Under those conditions they offer more statistical power than non-parametric alternatives. In data analytics, parametric methods are widely used for predictive analysis, hypothesis testing, and business intelligence reporting.

Why are non-parametric tests important in data analytics?

Answer:

Non-parametric tests are important because real-world datasets often do not meet the assumptions required for parametric testing. These tests work effectively with ordinal data, small sample sizes, and non-normal distributions. Data analysts use non-parametric techniques to analyze such datasets without forcing them to fit a normal distribution.

What are some common parametric and non-parametric tests?

Answer:

Popular parametric tests include the Z test, T test, and ANOVA, which are used for comparing means and analyzing numerical data. Common non-parametric tests include the Mann-Whitney U test, Wilcoxon Signed-Rank test, and Kruskal-Wallis test. These statistical tools are essential in modern data analytics and research.

Are parametric tests more powerful than non-parametric tests?

Answer:

Parametric tests are generally more powerful and statistically efficient when their assumptions are satisfied. They provide precise estimates and better performance for large datasets. In data analytics, businesses often prefer parametric methods for advanced statistical modeling and machine learning applications.

Can non-parametric tests be used for small sample sizes?

Answer:

Yes, non-parametric tests are highly suitable for small sample sizes because they do not require normal data distribution. These tests are reliable when dealing with ranked data, survey responses, or limited observations. In data analytics, non-parametric methods help analysts draw meaningful insights even from incomplete or irregular datasets.