Reinforcement Learning

What is Reinforcement Learning?

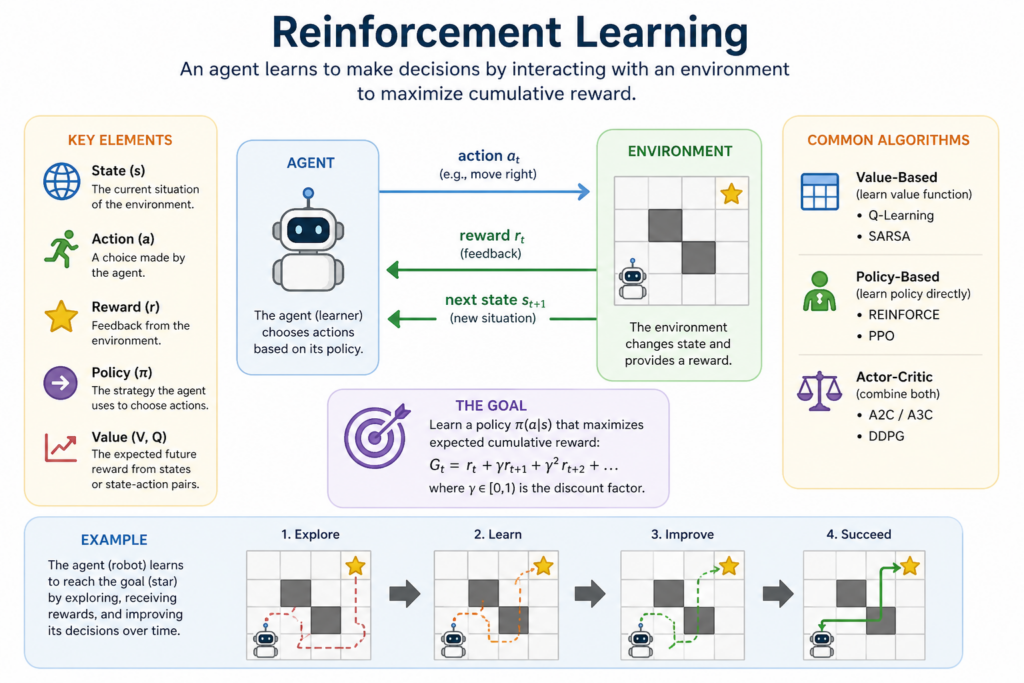

Reinforcement Learning (RL) is a type of machine learning where an agent learns to make decisions by interacting with an environment. The agent receives rewards or penalties based on its actions and aims to maximize cumulative rewards over time.

Reinforcement Learning is widely applied in fields such as robotics, game playing, autonomous driving, and financial modeling. With techniques such as Q-learning and deep reinforcement learning, RL can handle both simple and complex environments, which makes it a powerful tool for decision-making and adaptive learning.

In this article, we will explore how Reinforcement Learning works, its key concepts, and why it is well suited to dynamic and interactive problems.

Understanding Reinforcement Learning

Reinforcement Learning (RL) is a type of machine learning that focuses on how agents take actions in an environment to maximize cumulative rewards.

Unlike supervised learning, where models learn from labeled data, RL relies on an agent exploring an environment and learning from trial and error.

RL is widely used in robotics, game playing, self-driving cars, and even finance.

Well-known examples include AlphaGo and DeepMind’s other reinforcement learning systems, while OpenAI Gym is a widely used toolkit of environments for developing and comparing RL algorithms.

How Reinforcement Learning Works

Reinforcement Learning operates using the following core components:

Agent – The learning entity that interacts with the environment.

Environment – The external system where the agent operates.

State (S) – A representation of the environment at a specific moment.

Action (A) – A choice the agent makes in a given state, drawn from the set of actions available to it.

Reward (R) – Feedback received after taking an action; can be positive (reinforcing good actions) or negative (discouraging poor actions).

Policy (π) – A strategy that the agent follows to determine its actions.

Value Function (V) – The expected long-term reward for being in a given state.

Q-Function (Q) – The expected long-term reward for taking a specific action in a given state and following the policy afterward.

The goal of RL is for the agent to learn the best policy to maximize long-term rewards by balancing exploration (trying new actions) and exploitation (choosing the best-known action).
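To make "long-term reward" concrete, here is a minimal sketch that adds up a sequence of rewards while discounting later ones. The function name `discounted_return` and the example reward list are illustrative assumptions; `gamma` is the discount factor that appears again in the Q-Learning section.

```python
def discounted_return(rewards, gamma=0.95):
    """Sum of rewards, where a reward received k steps later is scaled by gamma**k."""
    total = 0.0
    # Walk backwards so each earlier step applies one more factor of gamma
    for reward in reversed(rewards):
        total = reward + gamma * total
    return total


# A reward of 1 that arrives two steps in the future is worth 0.5 * 0.5 = 0.25 now
print(discounted_return([0.0, 0.0, 1.0], gamma=0.5))  # 0.25
```

A smaller `gamma` makes the agent short-sighted, while a value close to 1 makes distant rewards count almost as much as immediate ones.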

Types of Reinforcement Learning

1. Model-Free vs. Model-Based RL

Model-Free RL – The agent learns directly from interactions with the environment without an explicit model of it (e.g., Q-Learning, Policy Gradient methods).

Model-Based RL – The agent builds or is given a model of the environment and uses it to plan actions (e.g., Dynamic Programming, which assumes the model is known).

2. Value-Based vs. Policy-Based Methods

Value-Based RL – Focuses on learning the value of states and actions (e.g., Q-Learning, SARSA).

Policy-Based RL – Learns a policy directly rather than deriving it from a value function (e.g., REINFORCE). Actor-Critic methods are a hybrid: they learn a policy together with a value function.

Main Algorithms in Reinforcement Learning

1. Q-Learning (Value-Based Method)

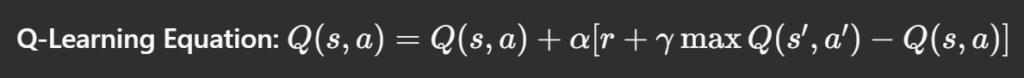

Q-Learning is an off-policy RL algorithm that uses the Bellman equation to update Q-values for state-action pairs.
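Written out with the symbols defined just below, the standard Q-learning update rule, which is also the update applied in the Python implementation later in this article, is as follows (a' denotes a candidate action in the next state s'):

$$
Q(s, a) \leftarrow Q(s, a) + \alpha \left[ r + \gamma \max_{a'} Q(s', a') - Q(s, a) \right]
$$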

where:

- α is the learning rate.

- γ is the discount factor.

- s, a, r, s’ represent the state, action, reward, and next state, respectively.
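A single Q-learning update can be traced by hand. The sketch below applies one update to a tiny two-state, two-action table; the helper name `q_update` and the example numbers are assumptions chosen for illustration.

```python
import numpy as np


def q_update(q_table, s, a, r, s_next, alpha=0.8, gamma=0.95):
    """Apply one Q-learning update in place and return the new Q(s, a)."""
    td_target = r + gamma * np.max(q_table[s_next])  # best value reachable from s_next
    td_error = td_target - q_table[s, a]             # how far the current estimate is off
    q_table[s, a] += alpha * td_error                # move part of the way toward the target
    return q_table[s, a]


q = np.zeros((2, 2))
q[1] = [0.0, 1.0]  # assume state 1 already has one action valued at 1.0

# target = 0 + 0.9 * 1.0 = 0.9, so Q(0, 1) moves from 0 to 0.5 * 0.9 = 0.45
print(q_update(q, s=0, a=1, r=0.0, s_next=1, alpha=0.5, gamma=0.9))  # 0.45
```

Even though the transition itself gave no reward, the value of state 1 flows backwards into state 0, which is how Q-learning propagates delayed rewards.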

2. Deep Q-Networks (DQN)

Deep Q-Networks (DQN) extend Q-Learning by using neural networks to approximate Q-values, allowing RL to work with high-dimensional data like images (e.g., in Atari games).
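The core idea of replacing the Q-table with a parametric function can be sketched without a deep learning library. The example below is not a full DQN: it uses a linear function in place of the neural network and leaves out the refinements that practical implementations usually add. The names `q_values` and `td_step` and the feature sizes are assumptions for illustration only.

```python
import numpy as np

rng = np.random.default_rng(0)
n_features, n_actions = 4, 2
# Linear stand-in for the network: one weight vector per action
weights = rng.normal(scale=0.1, size=(n_actions, n_features))


def q_values(weights, state):
    """Approximate Q(state, a) for every action a from a feature vector."""
    return weights @ state


def td_step(weights, state, action, reward, next_state, done, alpha=0.1, gamma=0.99):
    """One semi-gradient Q-learning step on a single transition (updates weights in place)."""
    if done:
        target = reward  # no future value after a terminal state
    else:
        target = reward + gamma * np.max(q_values(weights, next_state))
    td_error = target - q_values(weights, state)[action]
    # For a linear model, the gradient of Q(state, action) w.r.t. weights[action] is the state
    weights[action] += alpha * td_error * state
    return td_error


state = np.array([1.0, 0.0, 0.0, 0.0])
td_step(weights, state, action=0, reward=1.0, next_state=state, done=True)
print(q_values(weights, state))
```

A DQN follows the same pattern, with the linear map swapped for a neural network and the weight update carried out by backpropagation.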

3. Policy Gradient Methods

Instead of estimating value functions, policy gradient methods learn policies directly by optimizing reward-based objectives.

REINFORCE Algorithm – A simple policy gradient method that updates policies based on rewards received.

Actor-Critic Methods – Combine value-based and policy-based methods for more efficient learning.
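As a rough illustration of the REINFORCE idea, the sketch below trains a softmax policy on a one-step problem with two actions. The deterministic rewards, the function names, and the hyperparameters are simplifying assumptions; a full implementation would handle multi-step episodes and usually subtract a baseline. Actor-Critic methods are not shown.

```python
import numpy as np


def softmax(prefs):
    """Turn action preferences into a probability distribution."""
    exp = np.exp(prefs - np.max(prefs))
    return exp / exp.sum()


def reinforce_bandit(rewards, steps=500, lr=0.1, seed=0):
    """REINFORCE on a one-step problem: each episode consists of a single action."""
    rng = np.random.default_rng(seed)
    prefs = np.zeros(len(rewards))  # policy parameters, one preference per action
    for _ in range(steps):
        probs = softmax(prefs)
        action = rng.choice(len(prefs), p=probs)
        reward = rewards[action]  # deterministic reward (simplifying assumption)
        # Gradient of log pi(action) w.r.t. prefs is one_hot(action) - probs
        grad_log_pi = -probs
        grad_log_pi[action] += 1.0
        # Step in the direction that makes rewarded actions more likely
        prefs += lr * reward * grad_log_pi
    return softmax(prefs)


final_probs = reinforce_bandit(rewards=[0.0, 1.0])
print(final_probs)  # probability mass shifts toward the second action
```

No value table is learned here: the policy parameters are adjusted directly, which is the defining trait of policy gradient methods.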

Exploration-Exploitation Tradeoff in Reinforcement Learning

The exploration-exploitation tradeoff is a fundamental challenge in Reinforcement Learning (RL). It arises because an RL agent must decide whether to:

Explore new actions to discover potentially better rewards in the long run.

Exploit known actions that have already yielded high rewards.

Why is This Tradeoff Important?

If an agent only exploits, it might get stuck in a suboptimal policy, missing out on better rewards. On the other hand, if it only explores, it may waste time on less rewarding actions instead of capitalizing on the best-known choices.

Strategies to Balance Exploration and Exploitation

Epsilon-Greedy Algorithm – With probability ε, the agent explores randomly; otherwise, it exploits the best-known action.

Softmax Exploration – Samples actions from a probability distribution (such as the Boltzmann distribution) in which actions with higher estimated value are chosen more often.

Upper Confidence Bound (UCB) – Selects actions based on an optimistic estimate of their potential rewards.

Thompson Sampling – A Bayesian approach that balances exploration and exploitation based on probability distributions.
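The first three strategies above can be sketched as small action-selection helpers. The function names, the `temperature` parameter, and the exploration constant `c` are assumptions made for this example; Thompson Sampling is left out because it needs a posterior model of the rewards.

```python
import numpy as np


def epsilon_greedy(q_values, epsilon, rng):
    """Explore uniformly with probability epsilon, otherwise pick the best-known action."""
    if rng.random() < epsilon:
        return int(rng.integers(len(q_values)))
    return int(np.argmax(q_values))


def softmax_probs(q_values, temperature=1.0):
    """Boltzmann distribution over actions; a higher temperature means more exploration."""
    scaled = np.asarray(q_values, dtype=float) / temperature
    scaled -= scaled.max()  # subtract the max for numerical stability
    exp = np.exp(scaled)
    return exp / exp.sum()


def ucb_action(q_values, counts, t, c=2.0):
    """Pick the action with the highest optimistic estimate: value plus exploration bonus."""
    counts = np.asarray(counts, dtype=float)
    if np.any(counts == 0):
        return int(np.argmin(counts))  # try every action once before using the bonus
    bonus = c * np.sqrt(np.log(t) / counts)
    return int(np.argmax(np.asarray(q_values, dtype=float) + bonus))


rng = np.random.default_rng(0)
q_estimates = [0.1, 0.9, 0.3]
print(epsilon_greedy(q_estimates, epsilon=0.0, rng=rng))  # 1, since epsilon=0 never explores
print(softmax_probs(q_estimates))
print(ucb_action(q_estimates, counts=[5, 5, 0], t=10))    # 2, the action not yet tried
```

With softmax exploration, an action would then be drawn with `rng.choice(len(q_estimates), p=softmax_probs(q_estimates))`.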

In short:

In RL, an agent must balance:

Exploration – Trying new actions to discover potentially better policies.

Exploitation – Using known actions that yield high rewards.

Common strategies to balance this tradeoff include:

Epsilon-Greedy Method – The agent mostly exploits but explores with a small probability (epsilon, ε).

Boltzmann Exploration – Actions are chosen probabilistically based on their estimated value.

Difference between Exploration and Exploitation in RL

| Feature | Exploration | Exploitation |
|---|---|---|
| Definition | Trying new actions to discover better rewards | Choosing the best-known action for immediate reward |
| Objective | To gather more information about the environment | To maximize immediate rewards based on known information |
| Risk | High risk, as rewards are uncertain | Low risk, but may miss better long-term rewards |
| Short-term vs. Long-term | Focuses on long-term gains by learning more | Focuses on short-term gains using existing knowledge |
| Example in Reinforcement Learning | A robot testing new paths to find the shortest route | A robot always choosing the current shortest-known route |
| Example in Real Life | Trying a new restaurant to see if it’s better than usual choices | Going to a favorite restaurant because it’s a safe choice |
| Algorithms Handling Tradeoff | Encouraged using techniques like Epsilon-Greedy, UCB, and Thompson Sampling | Utilized when an optimal policy is nearly determined |

Implementing Reinforcement Learning in Python

Here’s a simple implementation of Q-Learning using OpenAI Gym:

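    import gym  # requires gym >= 0.26; the maintained fork Gymnasium follows the same reset/step API
    import numpy as np

    # Deterministic 4x4 grid world: reach the goal without falling into a hole
    env = gym.make("FrozenLake-v1", is_slippery=False)

    n_actions = env.action_space.n
    n_states = env.observation_space.n

    gamma = 0.95   # Discount factor
    alpha = 0.8    # Learning rate
    epsilon = 0.1  # Exploration rate

    # One row per state, one column per action; all estimates start at zero
    q_table = np.zeros((n_states, n_actions))

    for episode in range(1000):
        state = env.reset()[0]  # reset() returns (observation, info)
        done = False

        while not done:
            if np.random.uniform(0, 1) < epsilon:
                action = env.action_space.sample()  # Explore
            else:
                action = np.argmax(q_table[state, :])  # Exploit

            next_state, reward, terminated, truncated, _ = env.step(action)

            # Q-learning update: move Q(s, a) toward r + gamma * max over a' of Q(s', a')
            q_table[state, action] = q_table[state, action] + alpha * (
                reward + gamma * np.max(q_table[next_state, :]) - q_table[state, action]
            )

            state = next_state
            # End the episode on a terminal state or when the time limit is reached
            done = terminated or truncated

    print("Trained Q-Table:")
    print(q_table)

Explanation of Code: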

Initialize the environment – Uses OpenAI Gym’s FrozenLake environment.

Define parameters – Learning rate, discount factor, and exploration rate.

Train the Q-table – Updates Q-values using the Q-learning algorithm.

Make decisions – The agent explores or exploits based on epsilon.
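Once a Q-table has been trained, the learned behaviour can be read off by taking the highest-valued action in each state. The helper name `greedy_policy` and the small example table are assumptions for illustration; in the script above the same call would be applied to `q_table`.

```python
import numpy as np


def greedy_policy(q_table):
    """Return the index of the best-known action for every state (row) of the table."""
    return np.argmax(q_table, axis=1)


# Hypothetical 3-state, 2-action table standing in for a trained q_table
example_q = np.array([
    [0.1, 0.5],
    [0.7, 0.2],
    [0.0, 0.0],  # an unvisited state: ties resolve to action 0
])
print(greedy_policy(example_q))  # [1 0 0]
```

States whose row is still all zeros were never updated during training, so the action reported for them carries no information.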

Applications of Reinforcement Learning

Reinforcement Learning is used in many real-world applications, including:

Game AI – AlphaGo, OpenAI Five, and DeepMind’s RL models mastering complex games.

Robotics – Training robots to walk, grasp objects, or navigate environments.

Self-Driving Cars – RL helps in navigation and decision-making.

Finance & Trading – Automated stock trading strategies using RL-based models.

Healthcare – Personalized treatment recommendations and drug discovery.

Challenges in Reinforcement Learning

While RL is powerful, it comes with challenges:

Sample Inefficiency – Requires large amounts of data to learn effectively.

High Computational Costs – Training RL models can be computationally expensive.

Delayed Rewards – A reward may arrive long after the action that caused it, which makes it hard to work out which actions deserve credit and therefore hard to learn optimal policies.

Instability & Convergence Issues – Deep RL models, like DQNs, can be unstable if hyperparameters are not carefully tuned.

Conclusion:

Reinforcement Learning is a powerful machine learning approach for decision-making and control tasks. It enables machines to learn through trial and error, optimizing rewards over time. While RL has challenges such as high computational cost and sample inefficiency, advancements in deep learning continue to enhance its potential for real-world applications.

Frequently Asked Questions

What is Reinforcement Learning?

Answer:

Reinforcement Learning (RL) is a type of machine learning where an agent learns by interacting with an environment. It improves its decisions by receiving rewards or penalties based on its actions. Over time, the agent learns the best strategy to maximize rewards.

How does Reinforcement Learning work?

Answer:

Reinforcement Learning works through a cycle of state, action, reward, and feedback. The agent takes actions in an environment, receives rewards, and updates its policy accordingly. This trial-and-error process helps it learn optimal behavior.

What are the main components of Reinforcement Learning?

Answer:

The main components of Reinforcement Learning include the agent, environment, actions, rewards, and policy. These elements work together to help the agent learn decision-making strategies. Value functions are also used to evaluate long-term rewards.

Where is Reinforcement Learning used?

Answer:

Reinforcement Learning is widely used in robotics, gaming, recommendation systems, and self-driving cars. It also plays a role in finance, healthcare, and automation systems. RL helps machines make smarter, data-driven decisions in dynamic environments.

How is Reinforcement Learning different from supervised learning?

Answer:

Reinforcement Learning learns through rewards and interactions, while supervised learning relies on labeled data. RL focuses on decision-making over time, whereas supervised learning predicts outputs from given inputs. RL is more suitable for dynamic and sequential problems.

Why is Reinforcement Learning important?

Answer:

Reinforcement Learning is important because it enables machines to learn optimal actions without explicit instructions. It helps in solving complex problems where decisions must adapt over time. This makes RL essential for advanced AI systems and automation.